The Context Uniformity Problem

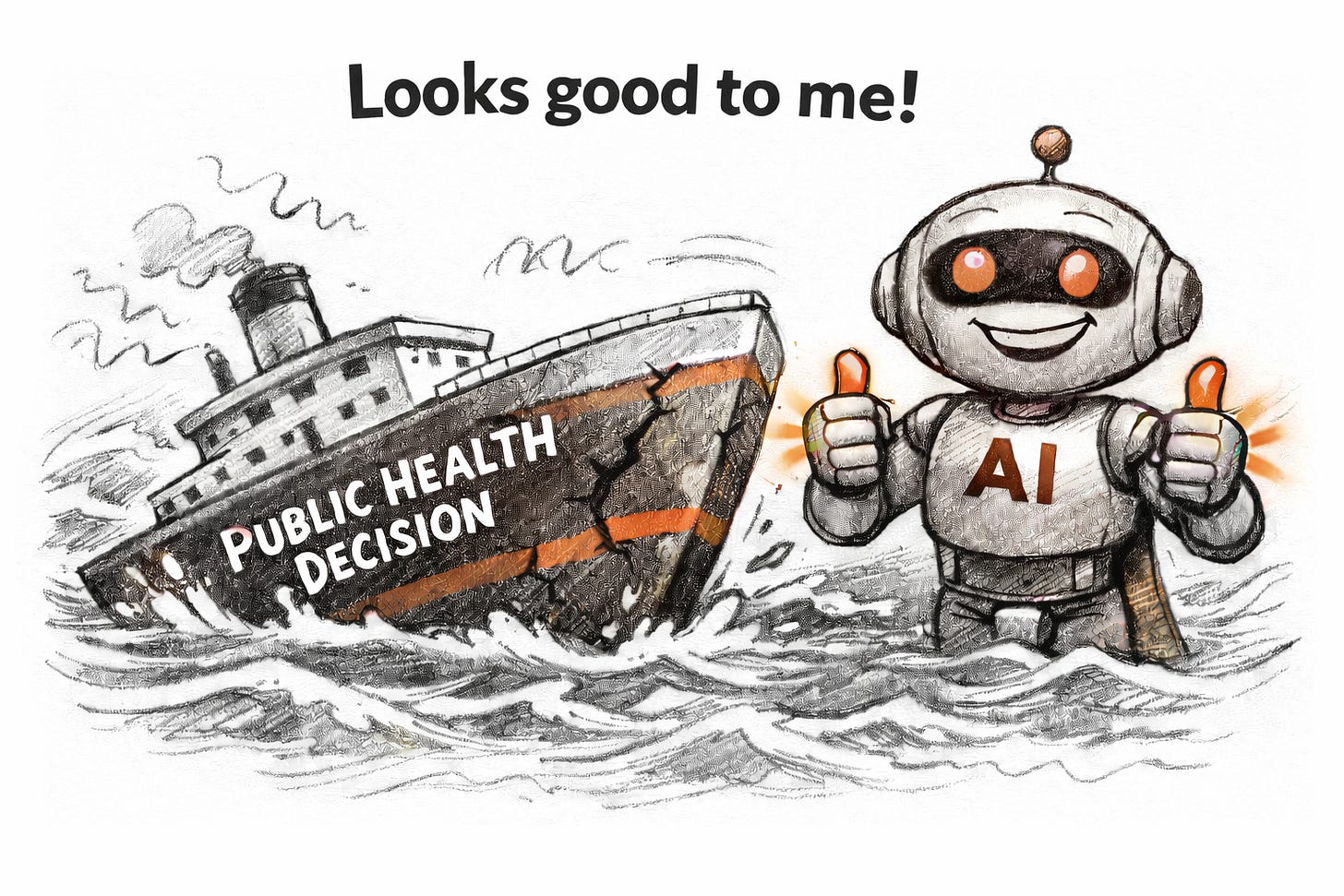

Why AI’s architectural design creates challenges that go beyond security vulnerabilities.

AI language models process all information through uniform mechanisms, and the consequences reach well past security. The same architectural features that prevent models from distinguishing trusted from untrusted content also cause them to miss information buried in long documents, cluster similar concepts together, and inherit biases from training data. For public health applications where context determines meaning, these limitations affect disease surveillance, clinical decision support, and health equity. Understanding these architectural constraints matters more than waiting for technical fixes.

A Uniformly Challenging Problem

Last week I wrote about the instruction versus data problem, the fundamental inability of AI language models to distinguish trusted instructions from untrusted content. That architectural feature creates security vulnerabilities, from prompt injection to data poisoning, that public health leaders need to understand.

But the instruction vs. data problem is actually just one manifestation of a broader phenomenon. The same architectural uniformity that prevents models from separating trusted from untrusted content also prevents them from distinguishing relevant from irrelevant, representative from biased, and near from far. These limitations matter for public health applications in ways that extend well beyond security.

Consider a phenomenon researchers call “lost-in-the-middle.” When language models receive a long document with relevant information buried in the centre, they consistently fail to use it. Information at the beginning and end gets retrieved well. The middle is effectively invisible. Accuracy drops by thirty to fifty percent depending on where critical content appears.

This is not a security vulnerability, yet the effect stems from the same root cause: AI systems process all information through uniform mechanisms, lacking the ability to assign different weight based on position, relevance, or authority. For public health, where context determines meaning and position in a patient history can matter as much as content, this uniformity creates challenges that governance frameworks alone cannot address.

The Geometry of Uniformity

The uniformity problem extends beyond the instruction-data boundary into the representational structure of these models, meaning the internal numerical patterns (called embeddings) that a model uses to encode the meaning of words and concepts. Research on embedding spaces reveals that language models do not use their available dimensions efficiently.

In an ideal vector space (a mathematical framework where each word is represented as a point in many-dimensional space), embeddings would spread uniformly across all available dimensions. This would maximise the distinctions the model can make between different concepts. In practice, embeddings collapse into narrow sub-manifolds (restricted regions within the larger space), a phenomenon researchers call the “cone effect” or representation degeneration. The expected similarity between random vectors, which should be near zero, is actually much higher than zero in real models.

This happens because of how models are trained. During optimisation, the model learns to push non-target tokens away. Rare tokens, which frequently appear as negatives but rarely as positives, get clustered together. The result is a skewed distribution that wastes the model’s theoretical degrees of freedom.

The consequences are practical. When embeddings cluster in narrow regions, the model struggles to make fine-grained distinctions. Two concepts that should be clearly differentiated end up close together in representational space. For retrieval-augmented systems that depend on distinguishing relevant from irrelevant documents, this geometric uniformity directly limits precision.
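To make the cone effect concrete, here is a minimal Python sketch. It is a toy illustration rather than a measurement of any real model: the dimension, the number of vectors, and the shared offset are all values I have assumed for the example. It compares the average cosine similarity of vectors spread evenly in all directions with the same vectors after they are pushed towards one shared direction.

```python
import math
import random


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def mean_pairwise_cosine(vectors):
    """Average cosine similarity over all distinct pairs of vectors."""
    total, count = 0.0, 0
    for i in range(len(vectors)):
        for j in range(i + 1, len(vectors)):
            total += cosine(vectors[i], vectors[j])
            count += 1
    return total / count


rng = random.Random(0)  # fixed seed so the run is repeatable
DIM, N = 64, 100        # illustrative sizes, not taken from any real model

# "Ideal" case: every coordinate is independent noise, so directions are spread out.
isotropic = [[rng.gauss(0.0, 1.0) for _ in range(DIM)] for _ in range(N)]

# "Cone" case: the same noise plus a shared offset (assumed value) on every coordinate,
# which pulls all vectors towards one common direction.
SHARED_OFFSET = 3.0
cone = [[x + SHARED_OFFSET for x in vec] for vec in isotropic]

iso_sim = mean_pairwise_cosine(isotropic)
cone_sim = mean_pairwise_cosine(cone)
print(f"isotropic: {iso_sim:.3f}  cone: {cone_sim:.3f}")
```

In the spread-out case the average similarity sits close to zero, while in the cone case it is close to one. The second situation is the one that leaves little room for fine-grained distinctions.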

Where Position Meets Memory

The lost-in-the-middle phenomenon is an emergent property of how models encode position (Table 1). Language models use mathematical techniques to track where tokens appear in a sequence. The dominant approach, Rotary Position Embeddings (RoPE), encodes position through rotation matrices: each token’s vector is rotated by an amount that depends on where it sits in the sequence, which lets the model tell which tokens are near or far from each other. However, this encoding introduces a natural decay as distance increases.
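A small sketch may help show what rotation-based position encoding does. This is a simplified, standard-library version of the commonly described rotary scheme, not the code of any particular model. The function names, the base of 10,000, the 64-dimensional all-ones vectors, and the chosen positions are assumptions made for illustration.

```python
import math


def rope(vec, position, base=10000.0):
    """Rotate each consecutive pair of coordinates by a position-dependent angle."""
    dim = len(vec)
    rotated = []
    for i in range(0, dim, 2):
        # Earlier pairs rotate quickly with position, later pairs rotate slowly.
        theta = position * base ** (-i / dim)
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        rotated.extend([x * c - y * s, x * s + y * c])
    return rotated


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


# Toy query and key vectors (assumed; real models learn these).
query = [1.0] * 64
key = [1.0] * 64

# Same offset of 3 tokens at two different absolute positions.
score_same_offset_a = dot(rope(query, 5), rope(key, 2))
score_same_offset_b = dot(rope(query, 105), rope(key, 102))

# Same vectors, short distance versus long distance.
score_near = dot(rope(query, 1), rope(key, 0))
score_far = dot(rope(query, 200), rope(key, 0))

print(score_same_offset_a, score_same_offset_b)
print(score_near, score_far)
```

The first pair of scores matches, which shows that only the distance between two tokens matters, not their absolute positions. In this toy setting the far score is lower than the near score because the rotations at different frequencies drift out of phase with one another. Real query and key vectors are not all ones, so the size of the effect in a trained model will differ.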

The mathematics creates a systematic bias. Phase mismatches in the rotations produce what researchers call destructive interference at longer distances. Combined with the pressures of training on natural text, which emphasises beginnings (context-setting) and endings (next-token prediction), models adaptively neglect the middle portions of their input.

This creates a significant gap between advertised and effective capacity. A model might claim a context window of 128,000 tokens, but empirical testing shows that effective utilisation can fall short by up to ninety-nine percent in some scenarios, which in the worst case would leave only around 1,280 usable tokens. The Maximum Effective Context Window is often far smaller than the Maximum Context Window.

Additionally, the attention mechanism must distribute probability mass across all positions. When no semantic match exists for a particular query, the probability still has to go somewhere. This leads to attention “sinks” where certain tokens, often the start-of-sequence token, accumulate more than fifty percent of attention weight simply to maintain numerical stability (Table 1).
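The reason the probability "has to go somewhere" is that attention weights come from a softmax, which always sums to one. The sketch below is a toy example with made-up scores, not output from a real model. It shows both the even spread when nothing matches and how a single token with a modestly higher score absorbs most of the weight.

```python
import math


def softmax(scores):
    """Convert raw scores to weights that are positive and sum to one."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Eight positions, none of which matches the query (all scores equal; assumed values).
no_match = softmax([0.0] * 8)

# The same eight positions, but the first token has a higher score (assumed value of 3.0),
# standing in for a start-of-sequence token that acts as a sink.
with_sink = softmax([3.0] + [0.0] * 7)

print([round(w, 3) for w in no_match])
print([round(w, 3) for w in with_sink])
```

With no match, every position receives one eighth of the weight even though none is relevant. With the sink present, the first token takes roughly 0.74 of the total, leaving the remaining seven positions to share the rest.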

Table 1. Positional and attentional uniformity effects

* RoPE = Rotary Position Embeddings, a technique for encoding word positions using mathematical rotations.

† MCW = Maximum Context Window (advertised capacity); MECW = Maximum Effective Context Window (usable capacity).

The Epistemic Gaze

Training data introduces another dimension of uniformity. Large language models learn from corpora dominated by English-language content, with estimates suggesting around forty-four percent of training data comes from English sources. This creates what some researchers call the “Silicon Gaze,” a systematic bias that encodes Western perspectives as default assumptions.

Audits reveal that models consistently rank Western nations higher on subjective attributes like innovation or happiness, using GDP and English-language press coverage as implicit proxies for ground truth. The bias is not intentional but structural. When training data over-represents certain perspectives, the model treats those perspectives as more typical, more likely, more authoritative.

Five mechanisms propagate this bias: availability bias from wealthy English sources, pattern bias mirroring historical narratives, averaging bias compressing local variation into global rankings, trope bias amplifying stereotypes, and proxy bias substituting measurable correlates for genuine understanding. Together, these create outputs that feel authoritative but may reflect training data distributions rather than ground truth.

For in-context learning, where models adapt to new tasks based on examples in their input, this creates brittleness. The model applies distribution-specific heuristics learned during training rather than abstract principles. In other words, it learns shortcuts that work for the patterns in its training data, but these shortcuts may fail when applied to different populations or settings. Under distribution shift, when inputs differ from training patterns, performance degrades unpredictably.

The Retrieval Gap

Retrieval-augmented generation (RAG), where models query external databases to incorporate current information, was supposed to address some limitations of fixed training. However, RAG systems inherit the uniformity problem through a different pathway (Table 2).

Vector similarity, the basis for most retrieval systems, measures general semantic closeness. Task-specific relevance is a different property: a document might be semantically similar to a query without containing the precise information needed. There is also a formal limit. The “sign-rank” of a relevance matrix measures how many dimensions would be needed to perfectly capture all the relevance relationships between queries and documents, and for complex queries it grows exponentially with task complexity. Fixed-dimensional embeddings cannot represent all necessary combinations.

Chunking strategies compound the problem. Most systems divide documents into fixed-size segments for embedding. This uniform segmentation ignores semantic boundaries, splitting coherent arguments across chunks and forcing the model to synthesise fragments it was never designed to reassemble (Table 2).
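The effect of fixed-size segmentation is easy to see on a short example. The sketch below is illustrative only: the clinical note, the 40-character chunk size, and the function names are assumptions of mine, and the sentence splitter is deliberately naive. It contrasts cutting text every N characters with cutting at sentence boundaries.

```python
import re


def fixed_size_chunks(text, size):
    """Cut the text every `size` characters, ignoring sentence boundaries."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def sentence_chunks(text):
    """Naive alternative: split after sentence-ending punctuation."""
    return re.split(r"(?<=[.!?])\s+", text.strip())


# Hypothetical clinical note, written for this example.
note = (
    "Patient has a penicillin allergy. "
    "Do not prescribe amoxicillin. "
    "Follow up in two weeks."
)

fixed = fixed_size_chunks(note, 40)
by_sentence = sentence_chunks(note)

for chunk in fixed:
    print(repr(chunk))
for chunk in by_sentence:
    print(repr(chunk))
```

In the fixed-size output, at least one boundary lands in the middle of a sentence, so an instruction and the thing it refers to can end up in different chunks. The sentence-based version keeps each statement whole, although real systems need something more robust than a single regular expression.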

Research on contextual retrieval, which augments chunks with document-level summaries, reports that it reduces retrieval failure by thirty-five percent on average and by up to eighty-four percent in specialised domains. However, even improved retrieval cannot address what happens after retrieval. Models trained to be fluent often generate confident answers even when retrieved context is insufficient. The system lacks calibrated uncertainty about whether it has enough information to respond accurately.

Table 2. Retrieval-specific manifestations of context uniformity

The Frame Problem in Deployment

When AI systems trained in one context are deployed in another, the uniformity of their training creates what researchers call the frame problem. For example, a model validated on data from Western hospitals may fail to adapt to materially different clinical environments in low- and middle-income settings.

This matters for federated learning, where models are trained across distributed data sources. The standard aggregation algorithm assumes uniform data distributions across participants. When data is non-identically distributed, as it invariably is in real health systems, the averaging process suppresses local patterns. For example, research documents persistent medical imaging disparities for Black, female, and younger patients, with the highest errors appearing in intersectional subgroups.
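To illustrate how averaging can suppress a local pattern, here is a minimal sketch of a sample-size-weighted average in the style of federated averaging. I am assuming this is the kind of aggregation meant by "standard" above. The three sites, their sizes, and their one-parameter "models" are hypothetical numbers chosen to make the arithmetic easy to follow.

```python
def federated_average(client_weights, client_sizes):
    """Average each parameter across clients, weighted by local sample count."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(weights[i] * size for weights, size in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


# Hypothetical sites: two large hospitals that agree, one small clinic that differs.
site_weights = [[1.0], [1.0], [5.0]]
site_sizes = [900, 900, 200]

global_model = federated_average(site_weights, site_sizes)

gap_large_site = abs(global_model[0] - site_weights[0][0])
gap_small_site = abs(global_model[0] - site_weights[2][0])
print(global_model, gap_large_site, gap_small_site)
```

The combined parameter comes out at 1.4, which is close to what the two large sites learned and far from what the small clinic learned. The clinic's local pattern has not been removed, but it has been diluted in proportion to its share of the data.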

Forced standardisation through data exchange protocols can obscure rather than preserve local realities. When systems require data in particular formats, local conditions that do not fit those formats become invisible. Endemic diseases, local treatment practices, and community-specific health patterns may be lost in translation.

The cognitive effects of uniform AI adoption compound these challenges. Research identifies a correlation of -0.49 between frequency of AI tool use and critical thinking scores. Users develop automation bias, trusting AI outputs more than the evidence warrants. Cognitive capacity that would otherwise be used for evaluation atrophies through disuse. The uniformly confident tone of AI-generated content, which lacks the hedging and uncertainty expression that human experts naturally use, may accelerate this effect.

What This Means for Public Health

Public health applications intersect with context uniformity at multiple points, and understanding these intersections matters for how we evaluate, deploy, and govern AI tools (Table 3).

Disease surveillance systems that aggregate information from multiple sources, including social media, clinical reports, and news articles, are particularly vulnerable to retrieval failures. These systems depend on identifying weak signals amid noise. When retrieval mechanisms favour semantically common content over contextually relevant content, unusual patterns that might indicate emerging outbreaks can be missed. Someone who understands which documents a surveillance system retrieves could also influence outbreak assessments by manipulating those sources.

Clinical decision support presents related concerns. When an AI system’s recommendations can shift based on how information is presented or where it appears in the input, establishing reliable behaviour becomes difficult. The lost-in-the-middle phenomenon means that a critical contraindication buried in a long patient history might not influence the system’s output. The lack of calibrated uncertainty means the system may express confidence it has not earned.

The epistemic uniformity of training data (the way training reflects a narrow set of knowledge sources and perspectives) has direct equity implications. Models trained predominantly on English-language sources from high-resource settings may perform well on conditions, presentations, and populations well-represented in that literature. They may perform poorly on conditions prevalent in low-resource settings, on presentations that differ from textbook descriptions, or on populations underrepresented in research. If our AI tools work only for some populations, we risk encoding existing health disparities into automated systems.

Federated learning, often proposed as a privacy-preserving approach to health AI, inherits the uniformity problem through aggregation. When local data distributions differ substantially from the global average, as they do across diverse health systems, the averaged model may underserve precisely the populations that most need support. Research showing diagnostic disparities in intersectional subgroups suggests this is not a theoretical concern but a documented pattern.

Health communication presents a different risk profile. AI systems that generate health information with uniform confidence, regardless of evidence quality, may erode the epistemic norms (shared standards for evaluating evidence and expressing uncertainty) that public health communication depends upon. When authoritative-sounding content can be generated without authoritative sources, distinguishing legitimate guidance from persuasive misinformation becomes harder for both professionals and the public.

As public health leaders, we should approach AI tools with these limitations in mind. Evaluation should test performance across the full range of populations we serve, not just aggregate benchmarks. Deployment should maintain human oversight for consequential decisions, recognising that AI systems may fail in ways that are difficult to predict from their average performance. Governance should establish accountability structures before problems emerge, not after (Table 3).
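The difference between an aggregate benchmark and subgroup performance can be shown in a few lines. This is a sketch with invented evaluation records rather than results from any real system. The group labels, the counts, and the function name are assumptions for illustration.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return accuracy per group from (group, was_correct) pairs."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, was_correct in records:
        total[group] += 1
        correct[group] += int(was_correct)
    return {group: correct[group] / total[group] for group in total}


# Invented evaluation set: group A is well represented, group B is not.
records = (
    [("A", True)] * 90
    + [("A", False)] * 5
    + [("B", True)] * 2
    + [("B", False)] * 3
)

overall = sum(was_correct for _, was_correct in records) / len(records)
per_group = accuracy_by_group(records)

print(f"overall: {overall:.2f}")
for group, acc in sorted(per_group.items()):
    print(f"group {group}: {acc:.2f}")
```

Here the overall accuracy is 0.92, which looks reassuring, while group B sits at 0.40. Reporting the per-group figures alongside the headline number is one simple way to keep that kind of gap visible.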

Table 3. Context uniformity risks by public health application

* Non-IID = Non-Independent and Identically Distributed. Standard machine learning assumes all data comes from the same distribution; non-IID aware algorithms are designed for the reality that different sites have different data patterns.

Pathways Toward Context-Aware Systems

Researchers are exploring ways to address context uniformity, though no solution yet exists that fully resolves these limitations.

Selective attention. Current AI models spread their attention across everything in their input, like a reader who gives equal weight to every word on a page. Newer approaches, including sparse attention mechanisms and mixture-of-experts architectures, try to help models focus on what matters most, concentrating processing power on relevant content rather than distributing it uniformly. Think of it as teaching the system to skim strategically rather than read every word with equal intensity.

Better memory for long documents. Some technical approaches aim to extend how much context a model can genuinely use, not just how much it can technically accept. State Space Models and multi-scale positional encoding try to preserve information from earlier in a document rather than letting it fade as more content arrives. Hybrid architectures attempt to combine the retrieval capabilities of current models with more efficient processing of longer sequences. The goal is to narrow the gap between advertised capacity and effective capacity.

Adaptive processing. Mixture-of-Depths approaches allow models to vary how much computation they apply to different parts of their input, recognising that not all information requires the same depth of analysis. A routine phrase might need minimal processing, while a critical clinical detail might warrant more.

Governance responses. The EU AI Act’s human oversight requirements represent one regulatory response to these limitations. Mandating human involvement for high-stakes decisions acknowledges that AI systems lack the contextual judgment that consequential decisions require. Rather than waiting for technical solutions, this approach builds human review into the system.

These approaches address symptoms rather than root causes. The basic architecture of current AI language models, the way they represent and process information, creates the conditions for context uniformity. Incremental improvements can reduce the problem, but truly resolving it may require fundamentally different approaches to building these systems. For now, understanding the limitations matters more than expecting imminent solutions.

The Context That Matters

The context uniformity problem reveals a gap between what AI systems can process and what they can understand. They can process vast amounts of information through uniform mechanisms. They cannot distinguish trusted from untrusted, relevant from irrelevant, representative from biased, with the reliability that many applications require.

For public health, context is rarely uniform. The same symptom means different things in different populations. The same intervention works differently in different settings. The same information carries different weight depending on its source and circumstances. Our work requires the contextual sensitivity that current AI architectures lack.

This does not mean AI tools are unusable. It means they require deployment contexts that compensate for their limitations: structured inputs that reduce positional effects (the AI’s tendency to miss or underweight information based on where it appears rather than what it says), human oversight for consequential decisions, local validation for equity-critical applications, and governance frameworks that establish accountability before rather than after problems emerge.

The lost-in-the-middle phenomenon is a useful reminder. AI systems can miss what matters most, not because of technical failure, but because of architectural uniformity. As public health leaders, we need to ensure that the critical context of our work (the populations we serve, the settings we operate in, the stakes of our decisions) does not get lost in the middle of our enthusiasm for new tools.

Disclaimer: The views expressed in this article are those of the author and do not reflect the policy or position of any affiliated organisation.